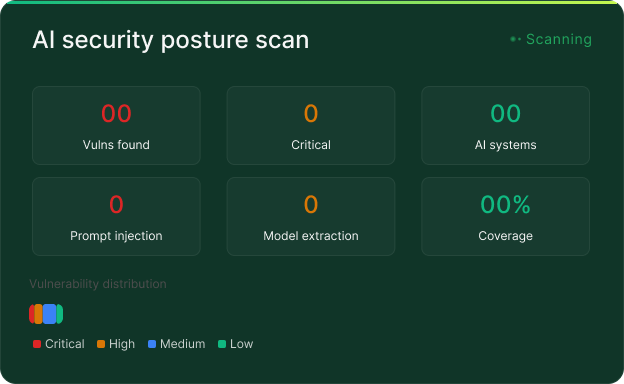

AI cybersecurity posture assessment: identify and eliminate active AI vulnerabilities

Identify exploitable vulnerabilities in your production AI systems before adversaries do. Expert-led adversarial testing covers all AI-specific attack vectors and is non-disruptive, technology-agnostic, and audit-ready.

15–25 avg vulns found

10–100x cost savings vs breach

$4.45M avg breach cost

- EU AI Act

- NIST AI RMF

- OWASP Top 10 for LLMs

- MITRE ATLAS