Why traditional FinOps fails for AI workloads, and what to build instead.

Executive Summary

As enterprise AI adoption accelerates, organizations are discovering that traditional FinOps practices are insufficient for managing AI-driven cost structures. While cloud infrastructure costs scale predictably with provisioned resources, AI costs scale with behavior — prompts, tokens, retries, routing decisions, AI agent execution loops, and model selection.

Most AI cost management today is retrospective. Dashboards report what has already happened. Alerts trigger after spend has occurred. Finance reviews bills after exposure is locked in. This model does not provide control.

AI FinOps must evolve from passive reporting to active enforcement, operating as a control plane embedded inline at the point of execution, where behavioral decisions translate into financial outcomes.

1. The Structural Problem: AI Spend Is Behavioral, Not Infrastructural

Cloud FinOps was built on a set of assumptions that held true for traditional infrastructure: predictable cost units, linear scaling with usage, native tagging and resource attribution, slow-moving pricing models, and centralized governance. AI systems violate every single one of these assumptions — and that is why AI FinOps requires its own discipline.

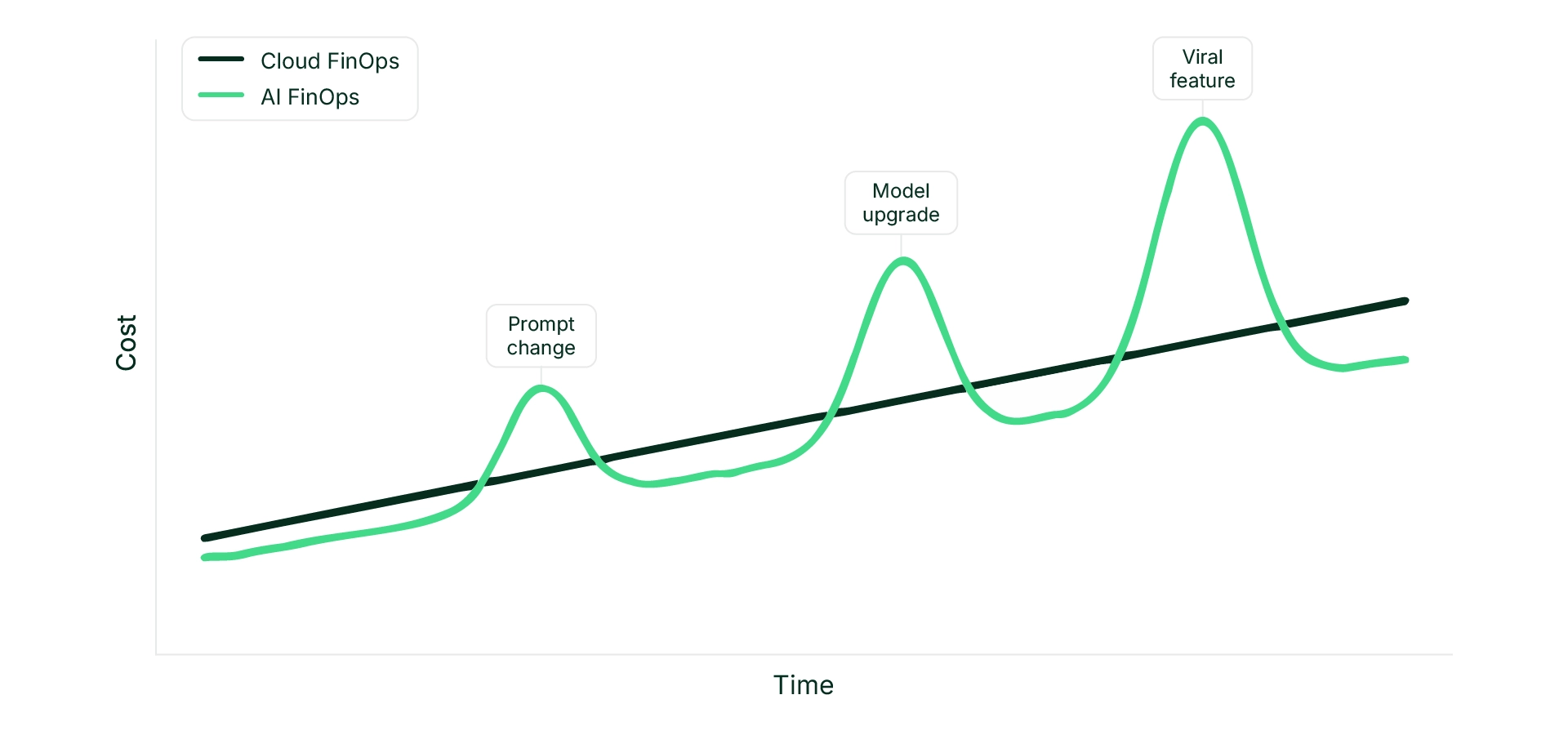

Image: Why AI Spend Feels Sudden

AI costs are driven by an entirely different set of forces: prompt length and context construction, model selection and routing logic, retry behavior and fallback chains, agent loops and orchestration depth, retrieval and embeddings, and concurrency amplification. Small configuration changes can produce disproportionate cost shifts. A slightly longer prompt increases token consumption. A model upgrade multiplies inference cost. Retry logic doubles execution volume. Agent chaining introduces compounding effects.
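To make the disproportion concrete, here is a minimal back-of-the-envelope sketch. The `CallProfile` type, the token counts, and the per-token prices are all hypothetical values chosen for illustration, not figures from any provider's price list.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CallProfile:
    """Hypothetical per-request behavior; every number here is illustrative."""
    input_tokens: int
    output_tokens: int
    input_price_per_1k: float          # USD per 1,000 input tokens (assumed)
    output_price_per_1k: float         # USD per 1,000 output tokens (assumed)
    attempts_per_request: float = 1.0  # 1.0 = no retries, 2.0 = every call retried once
    calls_per_request: int = 1         # model calls issued by an agent chain


def cost_per_request(p: CallProfile) -> float:
    """Expected cost of serving one user request, in USD."""
    per_call = (p.input_tokens / 1000) * p.input_price_per_1k \
        + (p.output_tokens / 1000) * p.output_price_per_1k
    return per_call * p.attempts_per_request * p.calls_per_request


# Baseline: short prompt, cheaper model, no retries, single call.
baseline = CallProfile(1000, 500, 0.001, 0.002)

# Four "small" changes: 50% longer prompt, a model priced 10x higher,
# every call retried once, and a three-step agent chain.
changed = CallProfile(
    input_tokens=1500,
    output_tokens=500,
    input_price_per_1k=0.010,
    output_price_per_1k=0.020,
    attempts_per_request=2.0,
    calls_per_request=3,
)

ratio = cost_per_request(changed) / cost_per_request(baseline)
print(f"cost multiplier: {ratio:.0f}x")
```

Under these assumed numbers, the changed configuration costs roughly 75 times the baseline per request, even though no single change looks dramatic in isolation.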

The result is non-linear cost behavior. And the data confirms it: in AI workloads, model prices can differ by 15–30× across providers and change frequently. Shared API keys and poor attribution make it nearly impossible to trace spend back to the feature or team that caused it. Shadow AI adoption is decentralized, so usage appears outside any central review. And because AI is probabilistic, producing different outputs for the same inputs, even identical workloads can generate unpredictable cost profiles.

This is not an operational anomaly. It is intrinsic to how modern AI systems function.

Cloud FinOps assumes variance is negligible. For AI, variance is inevitable.

2. Why Dashboards Fail to Provide Control

Most organizations manage AI costs using provider dashboards, monthly reconciliation reports, budget alerts, hard usage caps, and manual tagging in spreadsheets. These cloud cost optimization tools share a common and critical limitation: they operate after execution.

By the time spend appears in a dashboard, the API call has already been made, the tokens have already been consumed, the retry has already occurred, and the agent loop has already amplified usage. At that point, mitigation is purely reactive. Teams may throttle usage, downgrade models, or impose blunt restrictions, often disrupting production systems or degrading user experience in the process. What they need instead is an AI-driven FinOps agent that enforces governance inline, before cost is incurred.

FinOps dashboards provide visibility. They do not provide governance. And visibility without enforcement does not change behavior.

Industry data confirms this pattern: the majority of companies still rely on native cost monitoring tools, manual spreadsheet tracking, or third-party consulting to manage AI costs. A meaningful percentage have no formal system at all. The tooling gap is real, and the consequences are mounting.

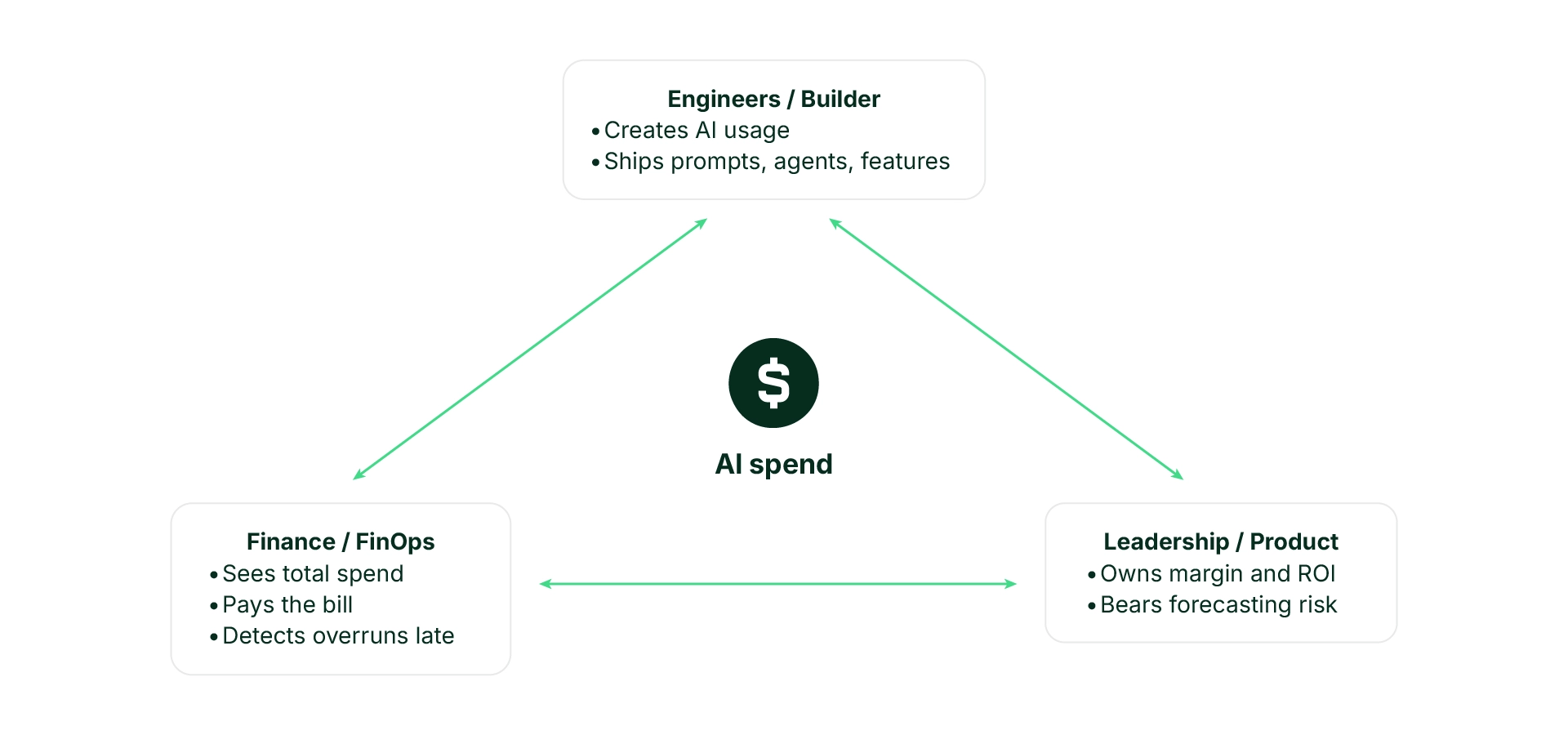

3. The Organizational Gap: Who Spends Is Not Who Feels It

AI spend introduces another structural issue that pure tooling cannot solve: misaligned accountability across the organization.

Engineers create AI usage: they ship prompts, agents, and features. Product leaders own margin and ROI: they bear forecasting risk. Finance sees total cost and budget variance: they pay the bill and detect overruns late.

Image: The People Who Spend AI Money Are Not The Ones Who Feel It

Cloud cost optimization rarely occurs at the moment of decision. When a developer selects a larger model, increases context length, or adds retry logic, the financial consequences are invisible in that workflow. When finance detects overspend weeks later, the behavioral cause is difficult to trace.

The system is fragmented: Decision → Execution → Billing → Investigation → Mitigation. By the time mitigation occurs, cost exposure is already realized. This is not a tooling problem. It is an architectural problem — one that requires aligning engineering, finance, and business around shared cost accountability.

When spend is created, paid, and owned by different teams, optimization never happens at the point of decision.

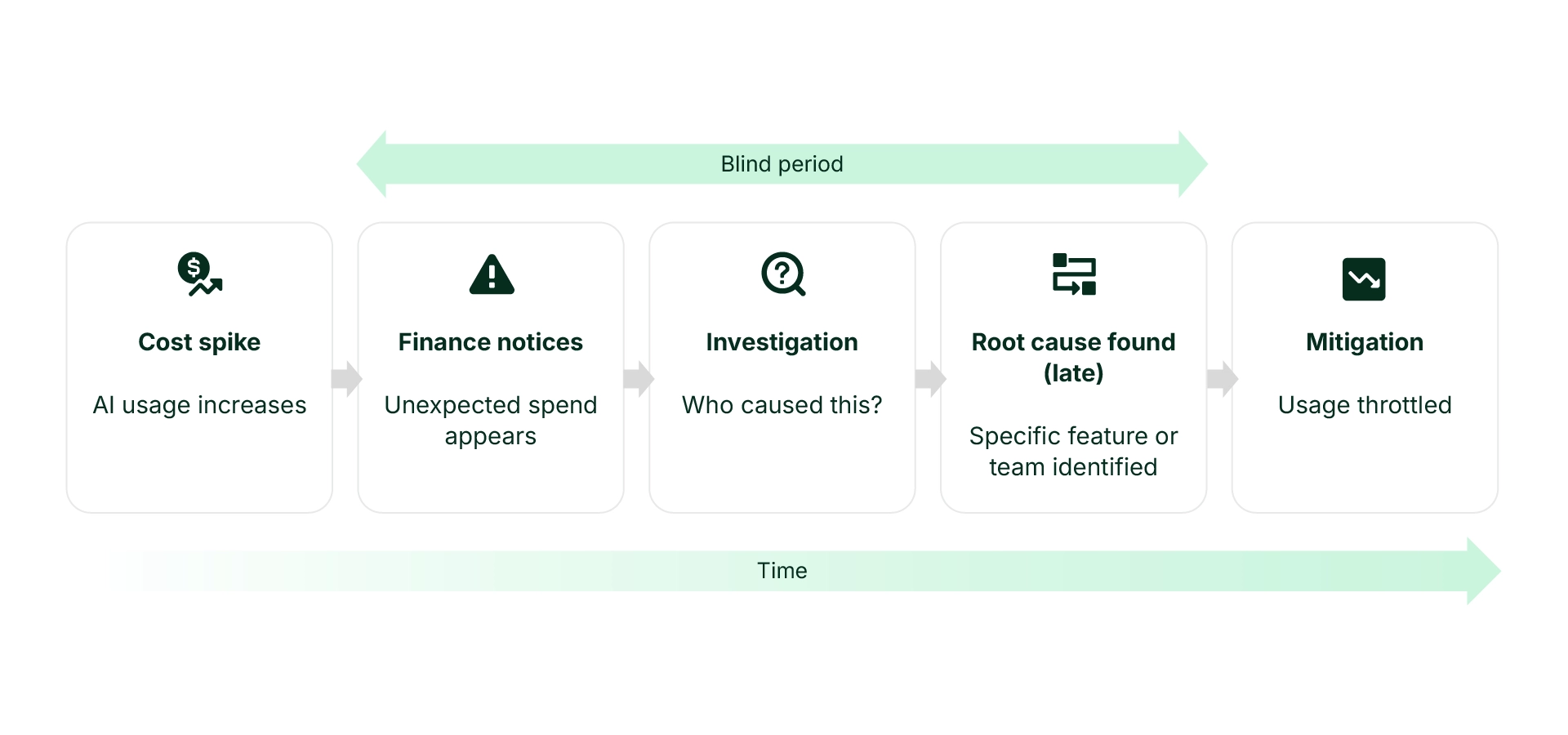

4. When AI Costs Spike, Companies React Blindly

The typical enterprise response to an AI cost spike follows a predictable and painful pattern:

- Cost spike: AI usage increases due to a prompt change, model swap, or agent loop.

- Finance notices: Unexpected spend appears in a cloud/AI bill review, with no feature-level visibility.

- Investigation: Shared API keys and missing tags turn root-cause analysis into forensic work.

- Root cause found (late): A specific feature or team is identified after digging through logs, spreadsheets, and internal interviews.

- Mitigation: Usage is throttled, but business impact has already occurred.

Image: When AI Costs Spike, Companies React Blindly and Panic

In a direct user interview, a senior engineering leader at a major enterprise described exactly this pattern: an AI feature drove rapid OpenAI API usage growth, with AI costs surfacing only after scale. The entire cycle from cost spike to mitigation played out in what amounted to a “blind period” where the organization had no real-time insight into what was happening.

By the time action is taken, cost is already sunk.

5. Visible vs. Opaque Cost Drivers

Part of what makes AI cost management so challenging is that the most important cost drivers are the ones teams cannot easily see.

Visible cost drivers (total API calls, total tokens by model, GPU hours, and obvious step-changes like model upgrades) show up in provider dashboards.

Opaque cost drivers (prompt length and verbosity, retries and agent loops, overkill model selection for simple tasks, caching gaps, and data pipelines tied to AI workflows) are where the real cost multipliers live.

The most important AI cost drivers live below the billing layer and are invisible without fine-grained tracking. Without behavioral visibility, cost optimization efforts focus on the wrong layer entirely.

6. The Seven Layers of AI Cost and Why They Compound

AI cost structure is not a single line item. It is a stack of seven interacting layers, each of which can multiply the next:

- Data preparation and upkeep

- Retrieval and memory access

- Context construction

- Model execution

- Orchestration and retries

- Parallelism and concurrency

- Evaluation, monitoring, and guardrails

The most important thing about these seven layers is that they interact. A retrieval inefficiency inflates context. Inflated context increases inference cost. Higher inference cost encourages routing to smaller models, which increases error rates. Errors trigger retries. Retries increase concurrency pressure. Concurrency pressure forces overprovisioning. Overprovisioning raises baseline cost.

What starts as a minor retrieval issue cascades into a fundamentally different cost profile. And the hidden costs of enterprise AI adoption (agent amplification with a 5–20× multiplier, RAG economics, SaaS AI embeds, and compliance overhead) often dwarf visible inference spend.
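The compounding effect can be sketched as a product of per-layer multipliers. The multipliers below are invented for illustration; the point is only that modest increases multiply rather than add.

```python
from math import prod

# Hypothetical cost multipliers for each step of the cascade (assumed values).
layer_multipliers = {
    "retrieval returns extra chunks": 1.30,
    "inflated context raises inference cost": 1.40,
    "retries after errors from a smaller model": 1.25,
    "concurrency pressure": 1.20,
    "overprovisioning": 1.15,
}

# Layers interact, so the effects multiply.
compound = prod(layer_multipliers.values())

# What you would estimate if you (wrongly) treated each increase as independent.
additive_guess = 1 + sum(m - 1 for m in layer_multipliers.values())

print(f"compound: {compound:.2f}x, additive estimate: {additive_guess:.2f}x")
```

With these assumed values, five increases of 15 to 40 percent each produce a total of about 3.14×, against about 2.3× if they merely added up. The gap widens as more layers are involved.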

RAG and agent costs can exceed AI inference spend at scale.

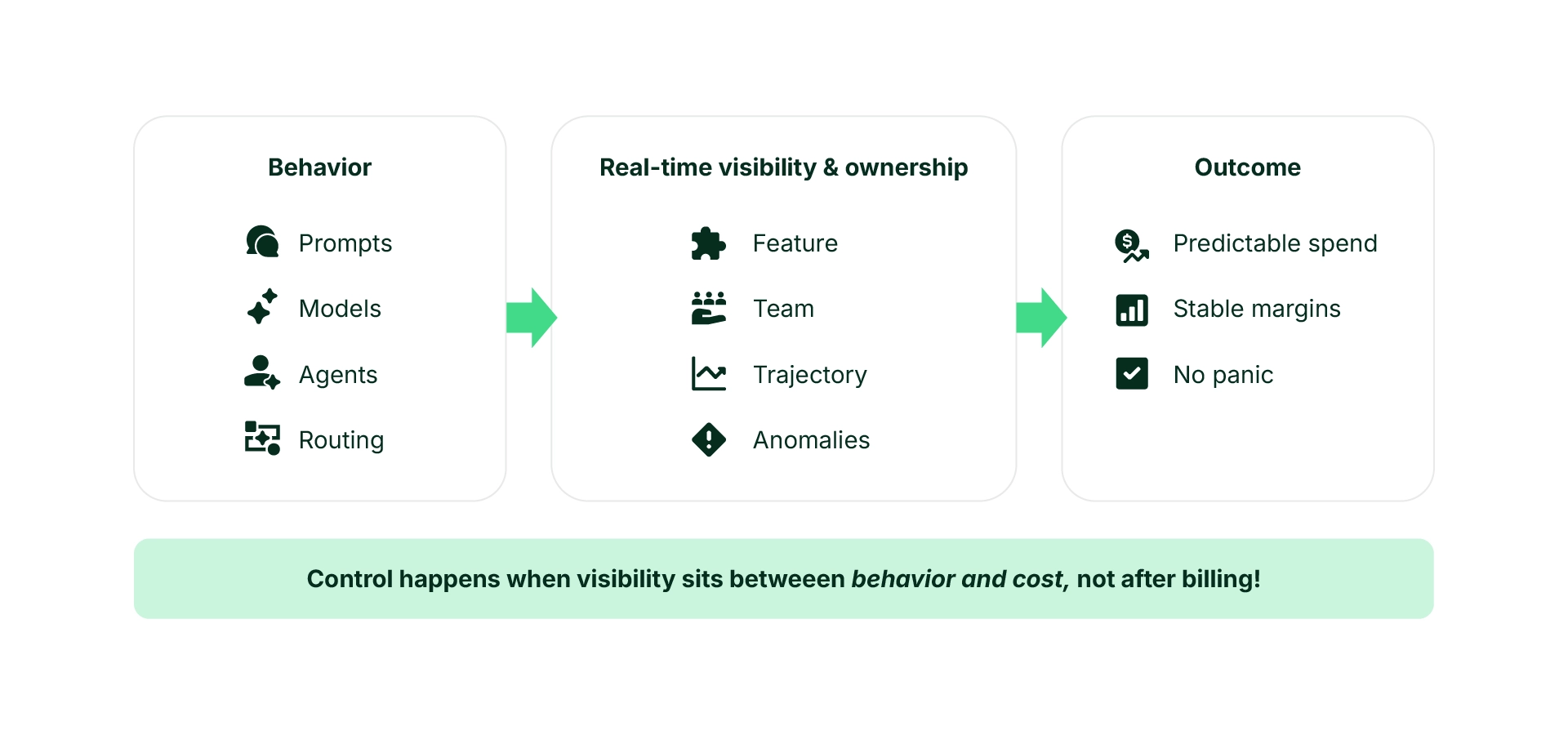

7. What Real AI Cost Control Requires

Effective AI FinOps must operate before cost is incurred, not after it is reported. That requires five structural shifts:

1. Execution-Level Attribution

Every AI execution must carry mandatory attribution: team ID, feature or service name, user or cost center, model selected, and retry behavior. Attribution cannot rely on manual tagging discipline; it must be enforced at ingress. Without granular attribution, root-cause analysis becomes forensic work. Gruve’s FinOps Cloud Cost Agent addresses this by building a FOCUS-native cost lake that standardizes allocation across all cloud and Kubernetes spend.
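As a minimal sketch of what "enforced at ingress" could look like, the snippet below rejects any request that arrives without the attribution fields listed above. The header names (`x-ai-team-id` and so on), the `Attribution` type, and the error class are hypothetical; they do not describe any particular product's API.

```python
from dataclasses import dataclass


class MissingAttributionError(ValueError):
    """Raised when a request arrives without mandatory attribution."""


@dataclass(frozen=True)
class Attribution:
    team_id: str
    feature: str
    cost_center: str
    model: str
    max_retries: int


# Hypothetical header names; any transport-level metadata would work.
HEADER_FOR_FIELD = {
    "team_id": "x-ai-team-id",
    "feature": "x-ai-feature",
    "cost_center": "x-ai-cost-center",
    "model": "x-ai-model",
    "max_retries": "x-ai-max-retries",
}


def parse_attribution(headers: dict[str, str]) -> Attribution:
    """Build an Attribution from request headers, or reject the request."""
    missing = [h for h in HEADER_FOR_FIELD.values() if not headers.get(h, "").strip()]
    if missing:
        raise MissingAttributionError("missing attribution headers: " + ", ".join(missing))

    raw_retries = headers[HEADER_FOR_FIELD["max_retries"]].strip()
    if not raw_retries.isdigit():
        raise MissingAttributionError("x-ai-max-retries must be a non-negative integer")

    return Attribution(
        team_id=headers[HEADER_FOR_FIELD["team_id"]].strip(),
        feature=headers[HEADER_FOR_FIELD["feature"]].strip(),
        cost_center=headers[HEADER_FOR_FIELD["cost_center"]].strip(),
        model=headers[HEADER_FOR_FIELD["model"]].strip(),
        max_retries=int(raw_retries),
    )
```

Because the check raises before any model call is made, an untagged request never generates spend that later has to be traced by hand.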

2. Inline Policy Evaluation

Security, compliance, and financial policies must be evaluated before the model call is executed. This includes budget verification, spend velocity checks, user authorization, data sensitivity policies, and model routing constraints. If checks fail, an enforcement action (degrade, reroute, throttle, or block) must be applied immediately, before cost is incurred.
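One way to picture inline evaluation is a pure function that maps a request and the current team state to an enforcement action. This is a sketch under stated assumptions: the `Request` and `TeamState` fields are hypothetical, and the pairing of each failed check with a specific action (for example, budget shortfall leading to degrade) is an illustrative choice, not a prescribed policy.

```python
from dataclasses import dataclass
from enum import Enum


class Action(Enum):
    ALLOW = "allow"
    DEGRADE = "degrade"    # e.g. serve the request with a cheaper model
    REROUTE = "reroute"    # e.g. send to a model cleared for this data
    THROTTLE = "throttle"  # e.g. delay or rate-limit the caller
    BLOCK = "block"


@dataclass(frozen=True)
class Request:
    team_id: str
    model: str
    estimated_cost_usd: float
    user_authorized: bool
    contains_sensitive_data: bool


@dataclass(frozen=True)
class TeamState:
    budget_remaining_usd: float
    velocity_usd_per_min: float
    velocity_limit_usd_per_min: float
    models_cleared_for_sensitive_data: frozenset[str]


def evaluate(req: Request, state: TeamState) -> Action:
    """Decide the enforcement action before any model call is made."""
    if not req.user_authorized:
        return Action.BLOCK
    if req.contains_sensitive_data and req.model not in state.models_cleared_for_sensitive_data:
        return Action.REROUTE
    if req.estimated_cost_usd > state.budget_remaining_usd:
        return Action.DEGRADE
    if state.velocity_usd_per_min > state.velocity_limit_usd_per_min:
        return Action.THROTTLE
    return Action.ALLOW
```

Keeping the decision free of side effects makes the policy easy to test and to replay against historical traffic before it is enforced in production.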

3. Spend Velocity Monitoring

Static budgets are insufficient for AI systems. AI workloads can spike within minutes due to prompt modifications, increased concurrency, agent amplification, or traffic bursts. Monitoring spend velocity (ΔCost / ΔTime) enables early detection of abnormal acceleration patterns. Guardrails can be applied before bill shock occurs, using rolling windows at the second, minute, and hour level to compare baseline versus current velocity.
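A rolling-window velocity tracker can be sketched in a few lines. The class name, the caller-supplied timestamps, and the 3× acceleration factor are assumptions made for illustration; a production system would tune the windows and thresholds per workload.

```python
from collections import deque


class SpendVelocity:
    """Tracks spend inside a rolling time window measured in seconds."""

    def __init__(self, window_seconds: float) -> None:
        self.window_seconds = window_seconds
        self._events: deque[tuple[float, float]] = deque()  # (timestamp, cost_usd)
        self._total = 0.0

    def record(self, timestamp: float, cost_usd: float) -> None:
        """Add one execution's cost and drop events that left the window."""
        self._events.append((timestamp, cost_usd))
        self._total += cost_usd
        self._evict(timestamp)

    def _evict(self, now: float) -> None:
        cutoff = now - self.window_seconds
        while self._events and self._events[0][0] <= cutoff:
            _, cost = self._events.popleft()
            self._total -= cost

    def usd_per_second(self, now: float) -> float:
        """Spend velocity (delta cost / delta time) over the window."""
        self._evict(now)
        return self._total / self.window_seconds


def is_accelerating(current: float, baseline: float, factor: float = 3.0) -> bool:
    """True when current velocity exceeds the baseline by the given factor."""
    return baseline > 0 and current > factor * baseline
```

In use, one instance per window (for example 1 second, 60 seconds, and 3,600 seconds) would be fed the same events, with the short-window velocity compared against the long-window one via `is_accelerating`.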

4. Behavioral Visibility

True cost drivers often exist above infrastructure: overly verbose prompts, inefficient context construction, redundant retries, overpowered model selection, and retrieval inefficiencies inflating context. Optimization requires visibility into behavioral drivers, not just aggregated token totals.
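As an illustration of the difference between aggregated totals and behavioral drivers, the sketch below groups execution records by feature and reports average prompt size and the share of calls that were retries. The `Execution` record shape is hypothetical.

```python
from collections import defaultdict
from typing import Iterable, TypedDict


class Execution(TypedDict):
    feature: str
    prompt_tokens: int
    attempt: int  # 1 for the first try, 2 or more for retries


def summarize(executions: Iterable[Execution]) -> dict[str, dict[str, float]]:
    """Per-feature behavioral metrics rather than one global token total."""
    calls: dict[str, int] = defaultdict(int)
    prompt_tokens: dict[str, int] = defaultdict(int)
    retries: dict[str, int] = defaultdict(int)

    for e in executions:
        feature = e["feature"]
        calls[feature] += 1
        prompt_tokens[feature] += e["prompt_tokens"]
        if e["attempt"] > 1:
            retries[feature] += 1

    return {
        feature: {
            "calls": calls[feature],
            "avg_prompt_tokens": prompt_tokens[feature] / calls[feature],
            "retry_share": retries[feature] / calls[feature],
        }
        for feature in calls
    }
```

A feature with a high `retry_share` or a steadily growing `avg_prompt_tokens` is a candidate for optimization even when its total token count looks unremarkable.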

5. Embedded Governance

Governance must live inside engineering workflows, not outside them. If cost visibility and enforcement are disconnected from development and deployment processes, teams will optimize for latency and quality while finance absorbs volatility. AI cost management must sit between behavior and billing.

Image: What Real AI Cost Control Looks Like

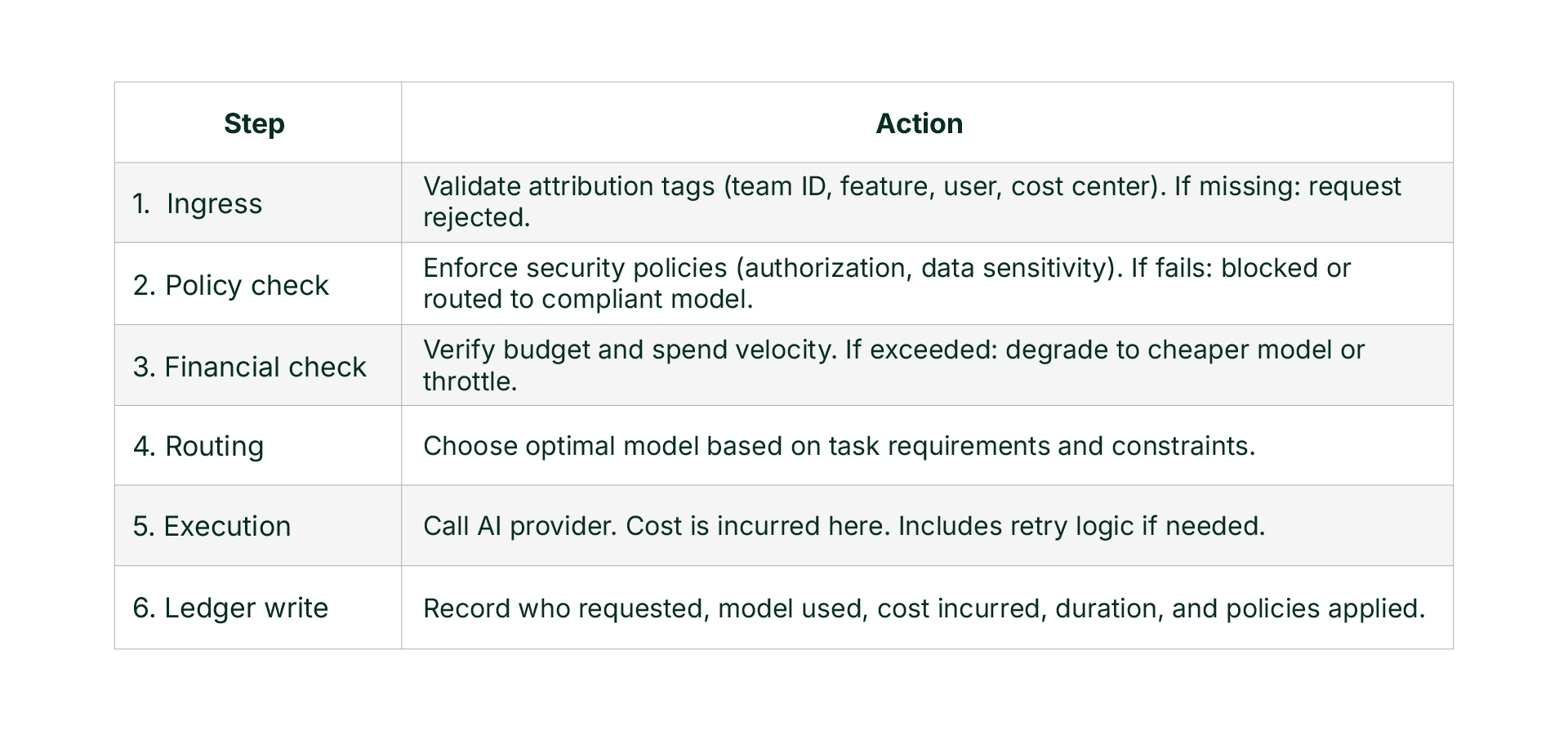

8. From Reporting Layer to Control Plane

A reporting dashboard answers: “What did we spend?”

A control plane answers: “Should this execution proceed, and under what constraints?”

The architectural difference is significant. An AI control plane operates as a gateway through which all AI traffic flows. It validates attribution at ingress, enforces security and compliance policies, verifies budget and spend velocity thresholds, selects optimal model routing, records execution metadata and cost, and writes to a canonical usage ledger.

Cost governance becomes proactive rather than retrospective. Policy becomes enforceable rather than advisory. Ownership becomes automatic rather than manual.

The AI Gateway: A Six-Step Enforcement Pipeline

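The pipeline applies the six gateway functions described above, in order:

- Validate attribution at ingress

- Enforce security and compliance policies

- Verify budget and spend velocity thresholds

- Select optimal model routing

- Record execution metadata and cost

- Write to a canonical usage ledger

Any execution that bypasses this pipeline is out of policy.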

Three key principles underpin this architecture: all AI traffic flows through the control plane, policies are evaluated before API calls, and there is a single source of truth.
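The six gateway functions can be sketched as a single `execute` path. This is a minimal illustration, not a reference implementation: the `Gateway` class, the placeholder sensitivity rule, the length-based routing rule, and the model names are all hypothetical, and the spend velocity check is omitted for brevity.

```python
from dataclasses import dataclass, field
from typing import Callable


class PolicyViolation(Exception):
    """Raised when a request fails a pre-execution check."""


@dataclass
class Gateway:
    budget_remaining_usd: float
    # Injected model client: (model, prompt) -> (output, cost_usd). Hypothetical shape.
    call_model: Callable[[str, str], tuple[str, float]]
    ledger: list[dict] = field(default_factory=list)

    def execute(self, attribution: dict[str, str], prompt: str,
                estimated_cost_usd: float) -> str:
        # 1. Validate attribution at ingress.
        for key in ("team_id", "feature"):
            if not attribution.get(key):
                raise PolicyViolation(f"missing attribution: {key}")

        # 2. Enforce security and compliance policy (placeholder rule).
        if "SECRET" in prompt:
            raise PolicyViolation("prompt failed data-sensitivity check")

        # 3. Verify budget before spending anything.
        if estimated_cost_usd > self.budget_remaining_usd:
            raise PolicyViolation("budget exceeded")

        # 4. Select model routing (placeholder rule based on prompt length).
        model = "small-model" if len(prompt) < 200 else "large-model"

        # 5. Execute, then record actual cost.
        output, cost_usd = self.call_model(model, prompt)
        self.budget_remaining_usd -= cost_usd

        # 6. Write to the usage ledger, the single source of truth.
        self.ledger.append({**attribution, "model": model, "cost_usd": cost_usd})
        return output
```

Steps 1 to 3 all run before `call_model` is invoked, so a rejected request costs nothing and leaves no ledger entry.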

9. This Is Not About Cost Cutting

AI FinOps is frequently framed as a cost optimization exercise. This framing is incomplete. The core objective is control.

Without real-time governance, margins become volatile, forecasting becomes unreliable, vendor negotiations lack transparency, autonomous agent adoption becomes risky, and executive confidence erodes. As organizations scale toward multi-model architectures, agent-based systems, and retrieval-augmented pipelines, cost variance increases. Without a control layer, that variance becomes structural business risk.

AI FinOps is less about cutting costs, and more about restoring control before AI spend becomes a structural business risk.

10. Implications for AI Pricing

The control plane argument has direct implications for how AI products should be priced. Because AI variance is inevitable, pricing must be designed for worst-case behavior, not average behavior.

Four pricing models emerge for AI products, each suited to different conditions:

- Usage-based pricing works when users understand cost drivers and value immediate efficiency.

- Hybrid pricing combines a base fee with usage overages and suits environments where users need freedom to explore and costs vary widely.

- Outcome-based pricing aligns incentives when outcomes are clear, narrow, and reliable.

- Capacity-based pricing applies when concurrency, latency, or availability drives cost more than volume.
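To illustrate why pricing should be tested against worst-case rather than average behavior, here is a small sketch of a hybrid invoice and the resulting margin. The fee, included units, overage price, and per-unit costs are all invented numbers.

```python
def hybrid_invoice(units_used: int, base_fee_usd: float,
                   included_units: int, overage_price_usd: float) -> float:
    """Base fee plus a per-unit charge for usage beyond the included amount."""
    overage_units = max(0, units_used - included_units)
    return base_fee_usd + overage_units * overage_price_usd


def gross_margin(revenue_usd: float, cost_usd: float) -> float:
    """Gross margin as a fraction of revenue; negative means a loss."""
    return (revenue_usd - cost_usd) / revenue_usd


# Hypothetical plan: $100 base, 1,000 units included, $0.08 per extra unit.
revenue = hybrid_invoice(units_used=1500, base_fee_usd=100.0,
                         included_units=1000, overage_price_usd=0.08)

# Same usage, two assumed serving costs per unit.
margin_average = gross_margin(revenue, cost_usd=1500 * 0.05)
margin_worst = gross_margin(revenue, cost_usd=1500 * 0.12)

print(f"revenue ${revenue:.2f}, average-case margin {margin_average:.0%}, "
      f"worst-case margin {margin_worst:.0%}")
```

With these assumed values the plan is comfortably profitable at the average cost per unit and loss-making at the worst-case cost, which is the scenario the pricing design has to survive.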

Choosing the right model requires answering five questions: Where does cost variance usually come from? Can users understand and control those costs? Does value emerge through exploration or execution? Does concurrency matter more than volume? And how stable is the system today? The goal is to force alignment between system behavior, user psychology, cost variance, and business survivability.

11. Conclusion: Dashboards Report. Control Planes Govern.

AI systems introduce behavioral cost dynamics that traditional cloud FinOps frameworks were not designed to handle.

If cost management remains retrospective, AI volatility will continue to manifest as surprise bills, reactive throttling, and fragmented accountability.

If governance moves inline — at the moment of execution — organizations regain predictability, accountability, and margin stability.

AI FinOps is not a reporting problem. It is an architectural one. And it requires a control plane.