Closing the AI Governance-Assurance Gap in Regulated Industries

Enterprise AI is no longer experimental. According to multiple industry analyst estimates, over 70% of organizations now deploy AI at scale. AI is also in production across every major regulated industry: processing insurance claims, supporting clinical decisions, scoring credit risk, and generating content for government-facing systems.

Yet most organizations that believe they have AI governance covered are operating with a dangerous blind spot. They have policies, committees, and frameworks. What they lack is the operational evidence to prove those policies are being followed. We refer to this as the AI Governance-Assurance Gap: the distance between documented AI policies and the operational evidence required to prove those policies are being followed in production systems. The specific risks differ depending on whether AI generates content or makes consequential decisions, but both require evidence-based assurance.

According to industry analyst research, organizations that deploy AI governance platforms are 3.4 times more likely to achieve high effectiveness in their AI programs. Yet only 14% of enterprises enforce AI assurance at the enterprise level. The governance structures exist on paper, but the operational plumbing to make them work in production does not.

The Gap Across Regulated Industries

The AI Governance-Assurance Gap is not unique to any single sector. It shows up wherever AI is used in decisions that affect customers, patients, or citizens, and where regulators expect organizations to show their work.

Insurance

AI adoption in insurance is accelerating across underwriting, pricing, claims, and fraud detection. More than twenty states have adopted the NAIC Model Bulletin on AI, increasing expectations for governance, risk management, and safeguards against unfair discrimination. Insurers using AI models and external data face scrutiny under state unfair trade practices laws and emerging requirements to demonstrate that algorithmic decisions do not produce biased outcomes. Regulators are also advancing structured AI evaluation frameworks, raising the compliance bar for carriers without centralized oversight of models and vendors.

Healthcare

AI adoption in healthcare is accelerating, with 46% of U.S. healthcare organizations now implementing generative AI. Yet the vast majority of medical AI is never reviewed by a federal or state regulator. New state laws in California, Texas, and Illinois now require disclosure when AI is used in clinical decisions, and several states are restricting AI use in insurance claims and prior authorization. HIPAA adds another layer: any AI system processing protected health information must meet Security Rule requirements, and healthcare organizations remain liable for their vendors’ AI systems.

Financial Services

The SEC’s 2026 examination priorities have elevated AI and cybersecurity above cryptocurrency as the dominant risk focus. Financial institutions using AI for credit scoring, fraud detection, and trading face scrutiny under fair lending laws, CFPB oversight, and model risk management expectations. Cyber insurers are increasingly conditioning coverage on documented evidence of AI-specific security controls and alignment with recognized risk management frameworks.

Government Contracting

Federal agencies are expanding AI procurement while raising the bar for governance. The White House’s AI Action Plan emphasizes objective, bias-free systems in public-sector applications, and contractors face requirements around content provenance, explainability, and export controls. The ability to demonstrate NIST AI RMF compliance and produce audit-ready documentation is increasingly a procurement prerequisite.

Regulatory Crosscurrents: State Action, Federal Uncertainty

Dozens of new state AI laws were enacted in 2025, and the trend is accelerating. Meanwhile, a December 2025 Executive Order seeks to preempt state AI laws deemed “onerous” through an AI Litigation Task Force and federal funding restrictions. The Colorado AI Act’s effective date was pushed to June 30, 2026. In the EU, the AI Act’s obligations for most high-risk systems begin to apply in August 2026.

For enterprises, the practical takeaway is clear: regardless of which level of government prevails, the underlying obligations persist. Insurers still cannot discriminate. Healthcare organizations still must protect patient data. Financial institutions still must explain consequential decisions. State attorneys general retain enforcement authority under existing consumer protection and civil rights statutes. Organizations that pause governance efforts in anticipation of deregulation are taking on significant risk.

Governance and Assurance: Two Halves of One System

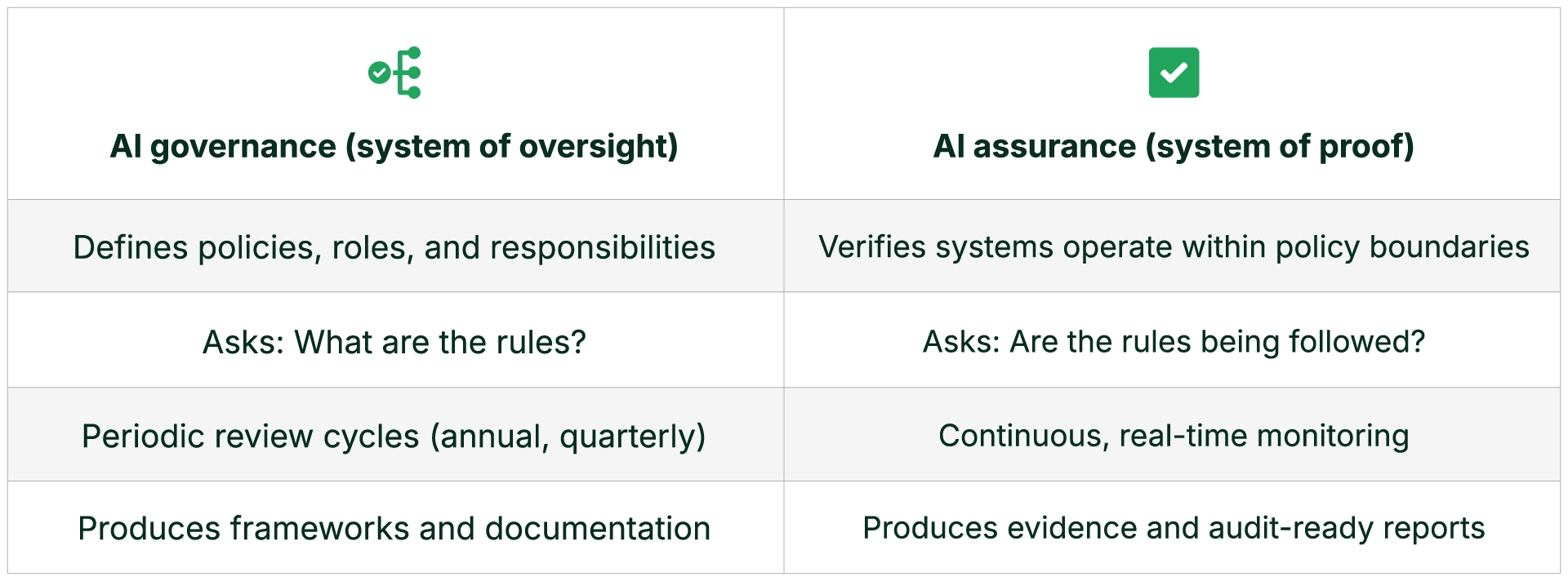

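Closing the gap requires understanding why it exists. Most organizations have invested in AI Governance (the policies, committees, and frameworks that define how AI should be used) but not in AI Assurance (the operational controls, monitoring, and evidence that show those policies are actually followed in production).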

Consider a common scenario. A regulator requests evidence that a pricing model does not produce disparate impact across protected classes. The insurer has a governance policy requiring fairness testing, but no centralized system linking model versions, training data, and decision outputs. Producing evidence requires weeks of manual reconstruction. The examination finding cites inadequate controls. The policy existed. The proof did not.

Governance without assurance is aspiration. Assurance without governance is ad hoc. Enterprises need both, operating as a single system.

What Leaders Should Do Now

- Establish a complete inventory of AI systems in production. In every regulated sector, this is typically the first thing regulators ask for.

- Identify high-risk decision use cases subject to regulatory scrutiny. Pricing, underwriting, claims, credit decisions, clinical recommendations, and government-facing outputs demand the highest level of oversight.

- Implement continuous monitoring, logging, and fairness testing. Periodic reviews are no longer sufficient. Evidence must be generated as a byproduct of normal operations, aligned with frameworks like NIST AI RMF, SR 11-7, and SOC 2.

- Align governance policy with operational controls. Policy without enforcement is aspiration. Controls embedded in the AI stack are what regulators verify.

- Prepare audit-ready evidence workflows before regulators ask. The organizations that produce evidence on demand will navigate examinations faster, with fewer findings and less disruption.
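The monitoring, fairness-testing, and evidence steps above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions. It is not a reference implementation or any vendor’s API. Each decision is logged at the moment it is made, together with the model version and a SHA-256 fingerprint of the training-data snapshot. A disparate impact ratio is then computed from that log on demand. The ratio is the favorable-outcome rate of one group divided by that of a reference group. The names `DecisionRecord`, `log_decision`, `disparate_impact_ratio`, and `export_evidence` are hypothetical, as are the record fields and the 0.8 threshold. The 0.8 cutoff is the “four-fifths” rule of thumb from U.S. employment-selection guidelines. Insurance, lending, and healthcare regulators may apply different tests.

```python
import hashlib
import json
from dataclasses import dataclass
from datetime import datetime, timezone


# Hypothetical record format (an assumption, not a regulatory schema): links each
# decision to the model version and training-data snapshot that produced it.
@dataclass(frozen=True)
class DecisionRecord:
    model_version: str
    training_data_sha256: str
    group: str        # group label used for fairness testing (e.g., a protected class)
    favorable: bool   # whether the decision was favorable to the applicant
    timestamp: str    # UTC time the decision was logged


def fingerprint(data: bytes) -> str:
    """Hash a training-data snapshot so the record shows which data was used."""
    return hashlib.sha256(data).hexdigest()


def log_decision(log, model_version, data_hash, group, favorable):
    """Append a record at decision time, so evidence accrues during normal operations."""
    record = DecisionRecord(model_version, data_hash, group, favorable,
                            datetime.now(timezone.utc).isoformat())
    log.append(record)
    return record


def disparate_impact_ratio(log, model_version, protected, reference):
    """Favorable-outcome rate of the protected group divided by the reference group's."""
    def rate(group):
        rows = [r for r in log if r.model_version == model_version and r.group == group]
        if not rows:
            raise ValueError(f"no decisions logged for group {group!r}")
        return sum(r.favorable for r in rows) / len(rows)

    reference_rate = rate(reference)
    if reference_rate == 0:
        raise ValueError("reference group has no favorable outcomes")
    return rate(protected) / reference_rate


def export_evidence(log, model_version, protected, reference, threshold=0.8):
    """Produce an audit-ready JSON summary on demand instead of rebuilding it by hand."""
    ratio = disparate_impact_ratio(log, model_version, protected, reference)
    rows = [r for r in log if r.model_version == model_version]
    return json.dumps({
        "model_version": model_version,
        "training_data_sha256": sorted({r.training_data_sha256 for r in rows}),
        "decisions": len(rows),
        "disparate_impact_ratio": round(ratio, 3),
        "passes_four_fifths_check": ratio >= threshold,
    }, indent=2)


if __name__ == "__main__":
    log = []
    data_hash = fingerprint(b"training-snapshot-2026-01")  # illustrative snapshot bytes
    for outcome in [True, True, True, False]:   # reference group "B": 3 of 4 favorable
        log_decision(log, "pricing-v3", data_hash, "B", outcome)
    for outcome in [True, True, False, False]:  # protected group "A": 2 of 4 favorable
        log_decision(log, "pricing-v3", data_hash, "A", outcome)
    print(export_evidence(log, "pricing-v3", protected="A", reference="B"))
```

With this design, the pricing scenario described earlier changes. The evidence a regulator asks for already exists when the request arrives: model version, training data fingerprint, decision outputs, and a fairness metric. In the sample run, the protected group’s favorable rate is 0.5 against 0.75 for the reference group. That gives a ratio of about 0.667, below the assumed 0.8 threshold, and the summary records that result explicitly.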

How Gruve and Trussed AI Help Close the Gap

Gruve helps companies design, build, and secure their AI use cases with an enterprise-tailored and outcome-driven approach: laying the foundations for data pipelines, model deployment, enterprise system integration, and security posture. Gruve’s compliance automation capabilities include policy change detection, regulatory mapping, and real-time compliance dashboards across business units and geographies.

Trussed AI provides the assurance layer. Trussed Controller is a drop-in compliance platform that wraps around any AI model to monitor every prompt and response in real time. It detects offensive content, political bias, hallucinations, and misinformation. It provides code provenance detection, identifying when AI-generated outputs contain source code from open-source projects subject to licensing obligations. Trussed enforces policy-based access controls and generates continuous compliance evidence aligned with the NIST AI Risk Management Framework.

Gruve ensures the AI foundation is compliant, secure, and well-governed. Trussed AI ensures the AI systems running on that foundation are continuously monitored and producing evidence of compliance. Together: governance and assurance, working as a single system.

The Bottom Line

Having an AI policy was a good start. In 2026, it is no longer enough. The enterprises that close the AI Governance-Assurance Gap will not only manage risk more effectively; they will also move faster, win more deals, and build the regulatory confidence needed to unlock AI’s full potential.

This is the first in a series of articles exploring AI compliance across regulated industries. Upcoming posts will take a deeper look at insurance, healthcare, financial services, and government contracting.

About Trussed AI

Trussed AI offers a comprehensive compliance platform for enterprises in regulated industries. Trussed Controller wraps around any AI model to provide real-time monitoring, code provenance detection for open-source license compliance, policy-based access controls, and continuous compliance evidence generation. Learn more at www.trussed.ai.

About Gruve

Gruve helps enterprises, neoclouds, and AI startups build and operate infrastructure-to-agent AI solutions where technical performance and business ROI converge. Through service offerings spanning AI Infrastructure, Data Foundation, Inference Infrastructure Fabric, and AI Application Accelerator, Gruve enables scalable, secure, and measurable AI execution in production.

Learn more at www.gruve.ai.

Media Contact

Name: Ash Bao

E-mail: press@gruve.ai